Windows 7 is still very much alive in the enterprise, even though today is the last day Microsoft officially supports the legacy operating system. Generally available since September 2019, Windows Virtual Desktop (WVD) allows customers to run a fully managed VDI environment from Azure. The underlying technology is similar to the Remote Desktop Services we have had in Windows Server since 2012, but the new Azure service abstracts away the infrastructure components which make it work. In other words, you no longer have to manage the RD Connection Broker, Gateway, Web Access etc. with WVD.

Customers who have not yet managed to migrate away from Windows 7 are effectively exposed, as new security updates will no longer be released for Windows 7 (unless you have a side agreement with Microsoft). However, when WVD was announced it was made clear that Windows 7 would be supported as an operating system in WVD deployments. In addition, Microsoft are providing free Extended Security Updates (ESU) to customers running Windows 7 as part of their WVD deployment.

Although it is very straightforward to get a Windows Virtual Desktop deployment up and running, a few extra steps are required if you would like your WVD Host Pools to spin out VMs running Windows 7. By default, it is only possible to create Host Pools with later operating systems such as Windows 10 Enterprise Multi-Session.

The process to make Windows 7 available to your WVD users is straightforward. You must create a custom "managed" image in Azure to be used as a reference template when creating a new WVD Host Pool.

The easiest way to do this is to deploy a new Windows 7 VM directly into Azure, install your apps and do any customisation, then convert it to an image. If for whatever reason this won't work for you, it is possible to do something similar: build a reference Windows 7 image on-premises, probably on Hyper-V, and run through the steps outlined in this guide: https://docs.microsoft.com/en-us/azure/virtual-desktop/set-up-customize-master-image

Step 1: Create a new Windows 7 VM

This is very simple: use the portal to provision a new Virtual Machine, selecting Windows 7 Enterprise as the source image. Windows 7 Enterprise is not in the quick drop-down list of operating systems, but if you search for it you will find that there is a single image available.
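
If you would rather script this step than click through the portal, the search and the VM creation can be sketched with the Azure CLI. This is a minimal sketch rather than the portal flow described above: the function names are hypothetical, and the publisher `MicrosoftWindowsDesktop` and the offer filter `windows-7` are assumptions about how the image is published, so check the listing output before relying on them.

```shell
# Sketch only: the Azure CLI calls are wrapped in functions so nothing runs until called.
# Assumes the Azure CLI is installed and `az login` has already been completed.

# List candidate Windows 7 images in a region.
# $1 = Azure region, for example uksouth
# The publisher and offer values are assumptions; adjust them if the listing is empty.
find_win7_image() {
  az vm image list \
    --location "$1" \
    --publisher MicrosoftWindowsDesktop \
    --offer windows-7 \
    --all \
    --output table
}

# Create the reference VM from an image URN copied out of the listing.
# $1 = resource group, $2 = VM name, $3 = image URN, $4 = admin username
# For a Windows image the CLI is expected to prompt for the admin password
# when none is supplied on the command line.
create_win7_vm() {
  az vm create \
    --resource-group "$1" \
    --name "$2" \
    --image "$3" \
    --admin-username "$4"
}
```

A typical run would be `find_win7_image uksouth`, followed by `create_win7_vm` with your own resource group, VM name, the URN from the listing and an admin username. All of those values are placeholders for your environment.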

Step 2: Install Apps and Make Customisations

Once the VM has deployed, log in to it and install any applications or make any customisations. It is worth noting that some applications need further configuration to make them user-ready at first launch; this would be addressed on a per-application basis.

Step 3: Run SysPrep to Generalise the VM

The SysPrep tool has been around in Windows since before WDS, going back to the days of Remote Installation Services (RIS). Its purpose has not changed since: it is a tool used to generalise a Windows installation (strip any machine-specific metadata away from it) to aid Windows imaging. If you fail to SysPrep your VM images you will have nothing but boot problems and instability.

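Open an elevated Command Prompt, cd to C:\Windows\System32\sysprep and run sysprep.exe.

In the System Preparation Tool dialog, set the System Cleanup Action to Enter System Out-of-Box Experience (OOBE) and ensure Generalize is ticked. Set the Shutdown Options to Shutdown so that the VM is powered off after the process has completed; if not, it will boot, begin detecting all the hardware and run the OOBE process. The same options can be passed on the command line as sysprep.exe /generalize /oobe /shutdown.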

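The SysPrep process should complete in a few minutes and leave you with a clean, generalised VM ready to be converted into an image.

Once the process has completed, you will see from the Azure Portal that the VM has entered the Stopped state. Note that shutting down from inside the guest only stops the VM; it is shown as Stopped (deallocated) once it has also been deallocated from Azure.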
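
If you are working from the command line rather than the portal, the VM also needs to be deallocated and flagged as generalised in Azure before an image can be taken from it. The following is a minimal sketch using the Azure CLI; the function name is hypothetical and it assumes SysPrep has already shut the VM down.

```shell
# Sketch only: assumes the Azure CLI is installed and `az login` has been completed.
# $1 = resource group, $2 = VM name
prepare_vm_for_capture() {
  # Release the compute resources so the VM is fully deallocated.
  az vm deallocate --resource-group "$1" --name "$2" || return 1
  # Mark the VM as generalised in Azure; it cannot be started again afterwards.
  az vm generalize --resource-group "$1" --name "$2"
}
```

Because a generalised VM can no longer be started, only call this once you are happy with the applications and customisations inside the guest.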

Step 4: Convert Windows 7 Image to Azure Image

Once we have the template ready, click into the Virtual Machine from the Portal and click the Capture button. This will begin the process of converting the VM to an Azure Managed Image.

The wizard will walk you through creating a new image; ensure that you give it a descriptive name. The name of the Azure Managed Image will be required when creating your WVD Host Pool, along with its Resource Group. You will notice the option to delete the VM once the image has been created; in this example I have chosen to select this option. However, in production you might want to keep the template VM in place so that you can service the image as time goes on.

Once the process completes you will have a new image available from the Portal. You can view all images by searching for Images in the global search bar.
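
For reference, the Capture step and the image listing can also be sketched with the Azure CLI. This is an illustrative sketch, not what the portal wizard runs: the function names are hypothetical, and it assumes the source VM has already been deallocated and generalised and lives in the same resource group as the new image.

```shell
# Sketch only: assumes the Azure CLI is installed and `az login` has been completed.

# Create a managed image from a generalised VM.
# $1 = resource group, $2 = new image name, $3 = source VM name
create_managed_image() {
  az image create --resource-group "$1" --name "$2" --source "$3"
}

# List the managed images in a resource group.
# $1 = resource group
list_managed_images() {
  az image list --resource-group "$1" --output table
}
```

Unlike the portal option described above, this sketch leaves the source VM in place, so delete it separately if you do not need it.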

Step 5: Create Windows Virtual Desktop Host Pool from Windows 7 Managed Image

The next step is to create a WVD Host Pool. This can be done by searching for WVD in the portal and selecting Create.

You must have a number of infrastructure components in place before you can deploy a WVD Host Pool. These include a WVD tenant, an AD DS domain, an AAD tenant with M365 licenses, and all the associated networking. When you create a WVD Host Pool, the deployment must be able to join your WVD Session Hosts to an Active Directory domain.

In the wizard I have named the Host Pool "win7-personal" and selected the type as Personal. This means that each user will maintain a 1:1 mapping with a dedicated Azure VM running Windows 7, which is created from this template. It is also possible to create Pooled WVD Host Pools, but there is limited value in doing this with Windows 7, because a desktop operating system such as Windows 7 only supports a single interactive user session at a time. This has been true of all desktop operating systems from Microsoft until the release of Windows 10 Enterprise Multi-Session, which is designed to allow pooled desktops to be created from a Windows 10 image.

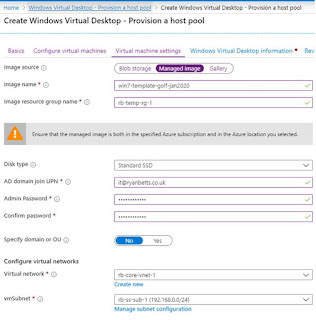

On the Virtual Machine Settings tab, instead of taking the default of selecting a Gallery image, click on Managed Image. This will then present two new fields: one for the Azure Managed Image name and one for the Resource Group that the image is in.
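
If you did not note the image name and Resource Group at capture time, they can be looked up from the command line. The sketch below uses the Azure CLI with a hypothetical function name. The location is included as well because a managed image can generally only be used to create VMs in its own region.

```shell
# Sketch only: assumes the Azure CLI is installed and `az login` has been completed.
# $1 = resource group, $2 = image name
show_image_details() {
  az image show \
    --resource-group "$1" \
    --name "$2" \
    --query "{Name:name, ResourceGroup:resourceGroup, Location:location}" \
    --output table
}
```

The Name and ResourceGroup columns are the two values the wizard asks for.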